I noticed from the pictures that the PCB on the 1060 is shorter than on the 1070 and 1080, just like the RX 480. I would expect to see some coolers designed with ITX in mind, which is exactly what I'm thinking about for my next system. That said, I'm more interested in a small form factor ITX card along the lines of what Gigabyte are putting out.

I'm wondering if AMD have held back the RX 490 to destroy the 1060 in the ~$300 range, so as not to be upstaged by nVidia. Officially there is no RX 490, but unofficially it keeps popping up on various manufacturers' sites and then disappearing when people spot the name.

You see, it is not the RX 480 GPU itself that is the problem, but the larger amount of memory plus the higher-clocked memory on the board.

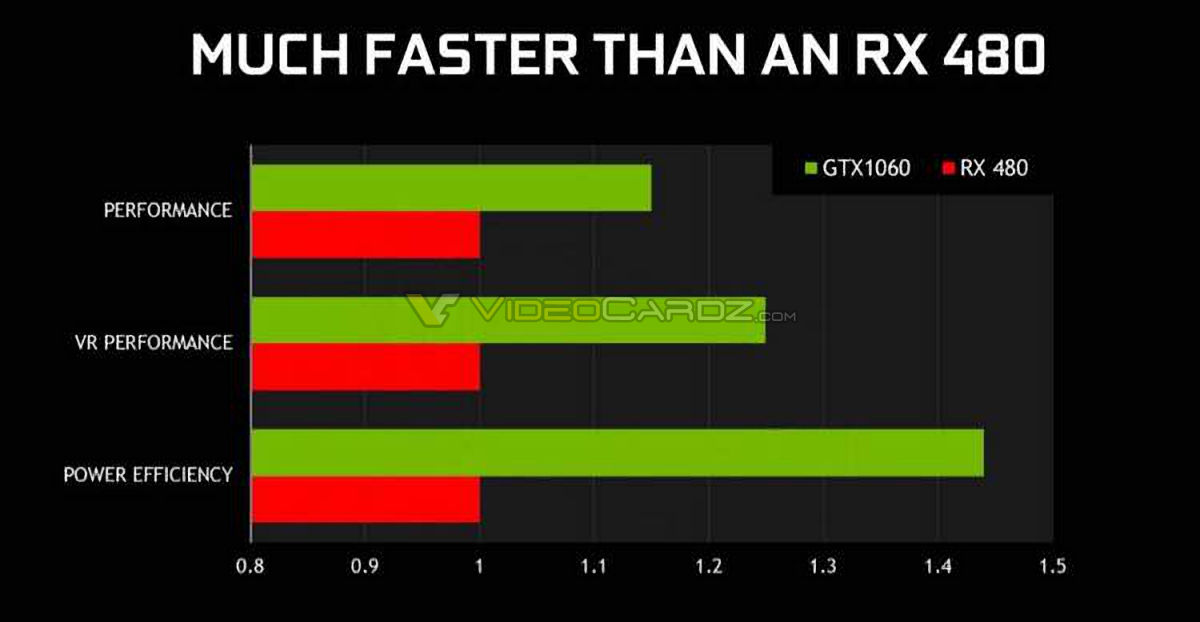

So they claim 15% more perf than the RX 480 (that "MUCH FASTER THAN AN RX 480" line actually means a 15% faster claim), 24% more VR perf and 43% better power efficiency. Based on that, I claim that on Linux it will be a 30% perf difference with nearly the same power efficiency.
