Announcement

Collapse

No announcement yet.

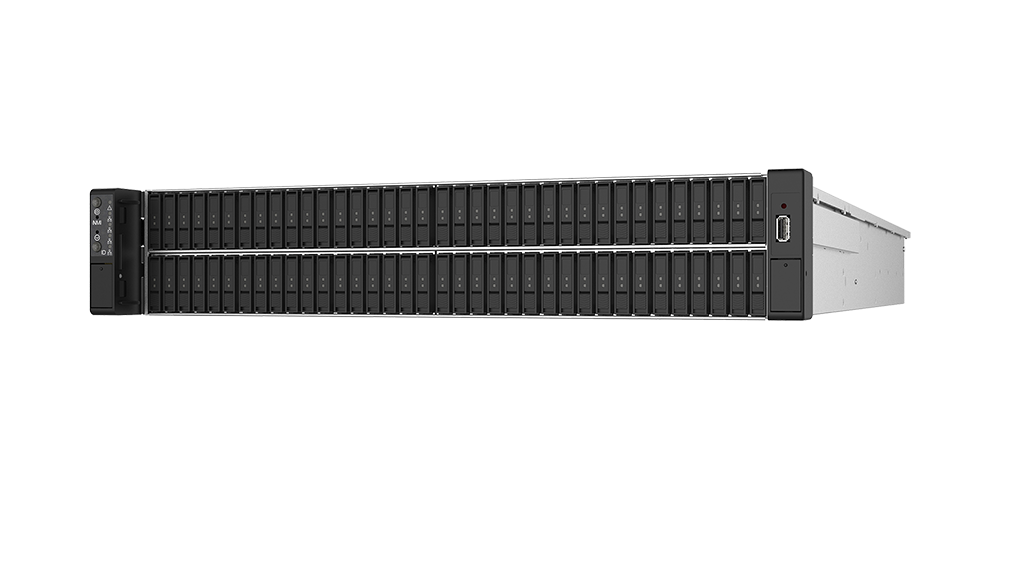

Google Has A Problem With Linux Server Reboots Too Slow Due To Too Many NVMe Drives

-

Originally posted by F.Ultra:

> AFAIK it can't be anything other than a write-back cache that causes the NVMe driver to wait, so the question you should ask yourself is what crazy-sized write-back caches Google uses that take 4.5 seconds to flush on an NVMe drive...

Hyperscalers have hyperscaler issues (of around a terabyte of memory). While my desktop is not going to have a terabyte of memory any time soon, the problems HPC and hyperscalers face today will be seen on workstations in a decade or less, so improvements made today are beneficial.

- Likes 4

-

Originally posted by dekernel:

> Guess I would be interested in knowing just what OS you are using

In the hyperscaler cloud space, hyperscalers reboot servers (including bare-metal servers) all the time, and every minute lost can mean substantial lost revenue at that scale (along with annoyed customers who want their systems available now, now, now).

- Likes 2

-

Originally posted by caligula:

> Wow, so many comments and nobody is wondering why a few seconds matter. After all, you'll reboot a desktop only once per day, and servers have uptimes of months. So it shouldn't matter even if it takes a few hours to boot.

If you have, let's say, 500k servers, then shaving 30 seconds off one shutdown of each saves about half a year of machine time overall.
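For anyone who wants to check the arithmetic, here is a quick sketch. The function name and the one-reboot-per-server default are my own assumptions for illustration; only the 500k servers and 30 seconds come from the post.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def fleet_time_saved_days(servers: int,
                          seconds_saved_per_reboot: float,
                          reboots_per_server: int = 1) -> float:
    """Total machine time saved across a fleet, expressed in days."""
    total_seconds = servers * seconds_saved_per_reboot * reboots_per_server
    return total_seconds / SECONDS_PER_DAY


if __name__ == "__main__":
    days = fleet_time_saved_days(500_000, 30)
    # 500,000 * 30 s = 15,000,000 s, i.e. roughly 174 days
    print(f"{days:.1f} days, or {days / 365:.2f} years")
```

That is summed machine time, not wall-clock time, since the servers shut down in parallel with each other.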

- Likes 1

-

Originally posted by mdedetrich:

> Yeah, the general impression I get with Linux is that, as an "OS" (and I am using that term loosely), it didn't really embrace the async paradigm; a lot of code appears to be written to just block until it gets some response.
>
> I notice this somewhat frequently. One example off the top of my head is that NetworkManager appears to block/freeze the UI when it's connecting to or disconnecting from different networks. Being predominantly written in C also didn't help in this regard, because doing this kind of programming in C is really hard (languages like C++/Rust allow you to provide higher-level abstractions in libraries that simplify this a lot).

It looks to me like an issue that is very complex and hard to get right.
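To illustrate the blocking-versus-async difference being discussed, here is a toy user-space sketch in Python. It is not how the kernel or the NVMe driver works; `shutdown_drive` and the sleep-based delay are hypothetical stand-ins for "wait until the device reports it is done".

```python
import asyncio
import time


async def shutdown_drive(name: str, flush_seconds: float) -> str:
    # Stand-in for waiting on a device to finish flushing (assumption).
    await asyncio.sleep(flush_seconds)
    return name


async def shutdown_serial(drives, flush_seconds):
    # Wait for each drive to finish before starting the next one.
    return [await shutdown_drive(d, flush_seconds) for d in drives]


async def shutdown_concurrent(drives, flush_seconds):
    # Start every shutdown first, then wait for all of them together.
    tasks = (shutdown_drive(d, flush_seconds) for d in drives)
    return list(await asyncio.gather(*tasks))


def timed(coro):
    """Run a coroutine to completion and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = asyncio.run(coro)
    return result, time.perf_counter() - start


if __name__ == "__main__":
    drives = [f"nvme{i}" for i in range(8)]
    _, serial = timed(shutdown_serial(drives, 0.05))
    _, concurrent = timed(shutdown_concurrent(drives, 0.05))
    print(f"serial: {serial:.2f}s, concurrent: {concurrent:.2f}s")
```

In the serial version the total wait grows with the number of drives, while in the concurrent version it is bounded by roughly the slowest single drive.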

I'm also unsure that async alone is the right approach in the long term. Some questions about priority and concurrency remain:

- What about a driver/task that is using data on an NVMe drive that is being shut down asynchronously? Will it fail? Will it force the shutdown process back into a serial one?

- What is the correct order of shutdown on every Linux box?

  - Apps, users, networks, filesystems, then keyboard/user input, and display last?

  - Apps, users, keyboard/user input, filesystems, networks, display, and finally filesystems?

- Or maybe it will depend on whether:

  - the system is a small Raspberry Pi or a large weather forecast cluster node?

  - the architecture is ARM, x86, MIPS, or RISC-V?

  - the system is optimized to sleep/hibernate rather than shut down/restart?

Commonly, the order of deactivation is the inverse of the order of activation. But in a modern OS like Linux, that has become far too complex, because many drivers, hardware devices, and dependencies can be plugged in at any time by users and processes.
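As a rough sketch of the "inverse order of activation" idea with some concurrency added, the snippet below groups components into shutdown waves: everything in one wave could in principle be stopped at the same time. The dependency map and component names are made up for illustration, and this is user-space Python using the standard `graphlib` module, not anything the kernel actually does.

```python
from graphlib import TopologicalSorter

# Hypothetical map: each component depends on the components in its set.
DEPENDS_ON = {
    "pci_bus": set(),
    "nvme0": {"pci_bus"},
    "nvme1": {"pci_bus"},
    "filesystem": {"nvme0", "nvme1"},
    "network": {"pci_bus"},
    "apps": {"filesystem", "network"},
}


def startup_order(depends_on):
    """One valid activation order: dependencies come before their users."""
    return list(TopologicalSorter(depends_on).static_order())


def shutdown_waves(depends_on):
    """Group components into waves that can be stopped concurrently."""
    # Invert the edges: a component may stop only after everything
    # that depends on it has already stopped.
    dependents = {name: set() for name in depends_on}
    for name, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(name)

    sorter = TopologicalSorter(dependents)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


if __name__ == "__main__":
    print("startup: ", startup_order(DEPENDS_ON))
    for number, wave in enumerate(shutdown_waves(DEPENDS_ON), start=1):
        print(f"shutdown wave {number}: {wave}")
```

With this map, the two NVMe drives land in the same wave, so they can be stopped in parallel, but only after the filesystem that uses them is gone. The hard part raised above is that on a real system the map changes at runtime as devices are plugged in and removed.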

Also, the myriad architectures and use cases that Linux supports, ranging from the smallest IoT and embedded devices to enormous servers and clusters, mean that ordering and concurrency may need to be handled in different ways depending on the setup.

I wouldn't be surprised if people eventually propose a "micro-systemd" or a "mini-upstart" inside the kernel for handling concurrent boot and shutdown before the kernel delegates control to PID 1.

-

Originally posted by CommunityMember:

> In the hyperscaler cloud space, hyperscalers reboot servers (including bare-metal servers) all the time, and every minute lost can mean substantial lost revenue at that scale (along with annoyed customers who want their systems available now, now, now).

And even if it's not for us, what is wrong with them scratching their own itches? As long as it's not to the detriment of others, isn't that part of the point of using open source?

- Likes 2
