Apple M1 Ultra With 20 CPU Cores, 64 Core GPU, 32 Core Neural Engine, Up To 128GB Memory

-

> Originally posted by Ladis
>
> The opensource developers are catching the commands the driver in macOS calls to the GPU. It's easy since they run the original macOS in a virtual machine running inside the bare metal Linux. The problem of Nouveau is the missing reclocking because of the missing firmware. That also demotivates more developers to work on the opensource driver.

I see, that makes logical sense. So given enough time and man-hours, and knowing the commands, a fully functional reverse-engineered GPU driver can ultimately be developed over the years.

So in some years an M1 will have better open source support than Nvidia and in theory as good as AMD or Intel if the motivation is there to accomplish it.

-

> Originally posted by mppix
>
> assuming you never need to add memory, or change processor, change GPU, .......

Seriously, how often do most people upgrade their PCs? It's hard to do so because new CPUs often need a new socket (and even if they fit they may not work, e.g. my 3700X motherboard does not accept Ryzen 5000). I can't upgrade to DDR5 or PCIe 4.0 either. So all I'm left with is increasing DRAM or a new SSD - and by the time I need to I might as well replace everything and get the benefit of faster memory, SSD and CPU as well.

- Likes 2

-

> Originally posted by PerformanceExpert
>
> Seriously, how often do most people upgrade their PCs? It's hard to do so because new CPUs often need a new socket (and even if they fit they may not work, e.g. my 3700X motherboard does not accept Ryzen 5000). I can't upgrade to DDR5 or PCIe 4.0 either. So all I'm left with is increasing DRAM or a new SSD - and by the time I need to I might as well replace everything and get the benefit of faster memory, SSD and CPU as well.

And in the majority of form factors sold by Apple, you can't upgrade PCs much more either. Half of those computers have everything soldered, too. Maybe the storage can be replaced, maybe there's one RAM slot.

-

> Originally posted by JEBjames
>
> I'm not a big apple fan.
>
> But even with a bit of extra marketing extra hype sauce on top those specs look good.

The numbers on paper, and performance in real world applications are often two very different things. Intel's Pentium 4 for example, which had sky-high clock speeds for its time, was trounced by AMD's lower-TDP, lower-clocked, lower-cost Athlon 64. And don't forget the Itanium, an industry-beating marvel of a chip on paper, was absolute trash in the real world and couldn't even compete with AMD's Opteron which cost a tiny fraction of Itanium's price.

That said, the PowerMac G5 systems destroyed the top wintel peecee's of the day, so clearly Apple has some experience in building highly performant non-x86 machines. The problem with Apple remains the same however, in that you're limited to only running OSX. Fine for some folks, not for others. And with Apple's hostility towards alternative operating systems, the chances of a FOSS OS bring-up during the product's lifetime are slim to none.

-

> Originally posted by torsionbar28
>
> The numbers on paper, and performance in real world applications are often two very different things. Intel's Pentium 4 for example, which had sky-high clock speeds for its time, was trounced by AMD's lower-TDP, lower-clocked, lower-cost Athlon 64. And don't forget the Itanium, an industry-beating marvel of a chip on paper, was absolute trash in the real world and couldn't even compete with AMD's Opteron which cost a tiny fraction of Itanium's price.
>
> That said, the PowerMac G5 systems destroyed the top wintel peecee's of the day, so clearly Apple has some experience in building highly performant non-x86 machines. The problem with Apple remains the same however, in that you're limited to only running OSX. Fine for some folks, not for others. And with Apple's hostility towards alternative operating systems, the chances of a FOSS OS bring-up during the product's lifetime are slim to none.

1. That's why we have real-life tests from experts using their computers for their jobs. Of course, half the performance on Apple comes from special circuits, but those were specifically chosen for their target groups. And the brute CPU performance is not small either; in fact it tops the ARM CPUs from other vendors. The Pentium 4 was a failure because Intel focused only on frequency, not performance. The high clock rate required a long pipeline, which cost a lot of time to empty when a branch prediction failed. Itanium could have been twice as fast if HP had wanted, but they didn't want to spend any money on improving Itanium. They just wanted to support legacy systems (this was at a time when engineers already knew that Itanium's approach was not the best).

2. G5 was better than x86, but IBM was not interested in spending money on improving it, so sooner or later the still-developing x86 became better. BTW, you're not limited to macOS on Apple. I myself also run Linux, not only on my x86 MacBook but also on my G4 iBook. I even run Windows in emulation on a PowerPC G4 (fun fact: in Microsoft Virtual PC software). And the improving Linux support on M1 is covered here in articles on Phoronix. If you want a hostile company about FOSS, look at NVidia, which will not give you firmware for your GPU no matter what. Apple loads it for you, even if you want to use your own OS.
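To put rough numbers on the pipeline point in item 1, here is a back-of-the-envelope sketch in Python. It uses the textbook approximation that every mispredicted branch costs a fixed number of flush cycles that grows with pipeline depth. All inputs (clock speeds, flush penalties, branch fraction, misprediction rate) are made-up values for illustration, not measurements of any real Pentium 4 or Athlon 64.

```python
def effective_cpi(base_cpi, branch_fraction, mispredict_rate, flush_penalty_cycles):
    """Average cycles per instruction once misprediction flushes are counted.

    Simplified model: every mispredicted branch wastes `flush_penalty_cycles`.
    """
    return base_cpi + branch_fraction * mispredict_rate * flush_penalty_cycles


def instructions_per_ns(clock_ghz, cpi):
    """Throughput in instructions per nanosecond (GHz divided by CPI)."""
    return clock_ghz / cpi


# Hypothetical cores: one deep and fast-clocked, one shallower and slower.
DEEP_PIPELINE = {"clock_ghz": 3.8, "flush_penalty_cycles": 30}
SHORT_PIPELINE = {"clock_ghz": 2.4, "flush_penalty_cycles": 12}


def throughput(core, base_cpi=1.0, branch_fraction=0.2, mispredict_rate=0.05):
    """Throughput of a hypothetical core under the assumed workload mix."""
    cpi = effective_cpi(base_cpi, branch_fraction, mispredict_rate,
                        core["flush_penalty_cycles"])
    return instructions_per_ns(core["clock_ghz"], cpi)


if __name__ == "__main__":
    clock_ratio = DEEP_PIPELINE["clock_ghz"] / SHORT_PIPELINE["clock_ghz"]
    perf_ratio = throughput(DEEP_PIPELINE) / throughput(SHORT_PIPELINE)
    print(f"clock advantage:      {clock_ratio:.2f}x")
    print(f"throughput advantage: {perf_ratio:.2f}x")
```

With these assumed inputs the deeper pipeline keeps only part of its clock advantage, because its flush term is larger. The model ignores IPC differences between the two designs, which in practice mattered at least as much.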

- Likes 2

-

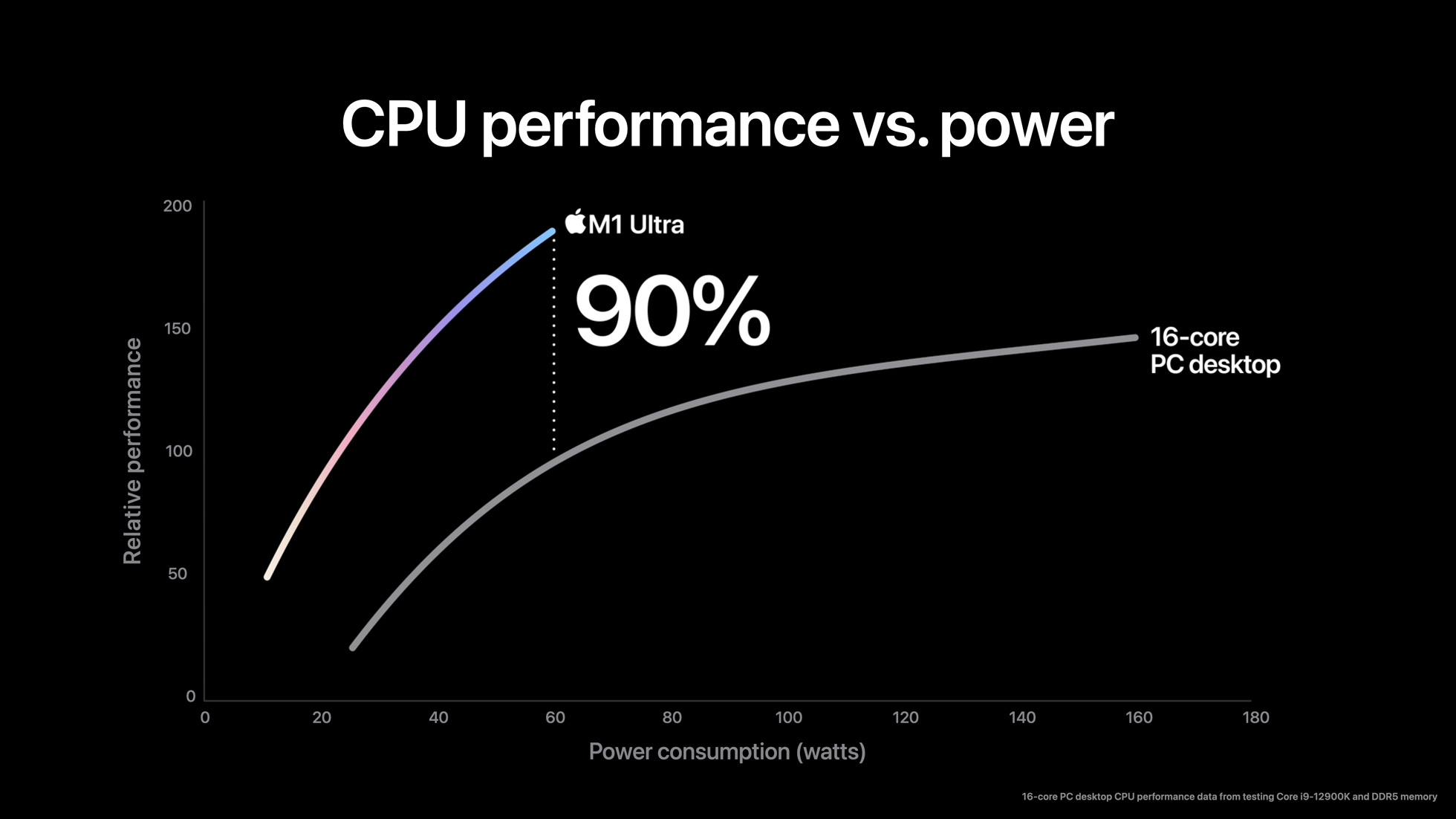

We don't know what workload(s) they used to collect this data, but it's a sweep of the performance across all power thresholds -- not only PL2!Originally posted by drakonas777 View PostRegarding efficiency you should keep in mind, that in 16C maximum performance case for example, they were using 12900K at PL2, which is really low efficiency bar to cross in this particular use case. Don't take all Apple benchmarks for a face value, most of their "Xs" and "percents" are quite inflated. Wait for independent benchmarks, especially real life workload based ones.

> Originally posted by drakonas777
>
> Also, TSMC N5 is about one and a half node ahead of Intel 7,

If these numbers are remotely accurate, it's a much bigger gap than just due to manufacturing. It's the kind of efficiency gap you only get between a microarchitecture that was designed for efficient performance from day 1 vs. Intel's desperate scramble to retake the x86 performance crown.

> Originally posted by drakonas777
>
> people tend to believe that M1s are some magical chips lightyears ahead of x86. They are not. Reality is they use superior node, rely on special purpose accelerators a lot and have tight integration with Apple SW stack.

We'll have to get some independent testing, but recent Apple cores have always delivered far better perf/W and perf/clock on the full spectrum of benchmarks, including generic ones like SPEC 2017. No "special accelerators" or "tight integration" in sight.

> Originally posted by drakonas777
>
> ARM ISA is not inherently vastly superior to x86 - you can google Jim Keller's talks on this matter.

True, but he's been out of the x86 game since like 2015, when a 4-way decoder was more than wide enough. I don't trust he's going to have terribly good insight into what bottlenecks x86 CPU designers are struggling with, today.

> Originally posted by drakonas777
>
> Upcoming TSMC N5 / INTEL 5 x86 cores will be at the very similar efficiency level,

This hasn't been true before and I see no reason to believe it will be true in future. You can go back and look at perf/W of Apple SoCs on TSMC 7 nm and it's still much better than AMD CPUs on the same node.

x86 is at an intrinsic disadvantage. The decoder is inefficient and a bottleneck, plus having ~half the GP registers limits ILP and forces spills that wouldn't happen on ARM.

- Likes 1

-

> Originally posted by ezst036
>
> So in some years an M1 will have better open source support than Nvidia and in theory as good as AMD or Intel if the motivation is there to accomplish it.

Assuming Apple doesn't call the police and have you evicted (i.e. start refusing to boot any OS image they didn't personally sign).

Remember, the Linux community is camping on Apple's lawn, just outside their walled garden.

> Originally posted by Ladis
>
> If you want a hostile company about FOSS, look at NVidia, which will not give you firmware for your GPU no matter what. Apple loads it for you, even if you want to use your own OS.

For now.

Some here will remember how Sony supported other OSes on the PS3... until they didn't. That's life on a closed platform.

Last edited by coder; 09 March 2022, 04:20 PM.

- Likes 1

-

> Originally posted by PerformanceExpert
>
> I can't upgrade to DDR5 or PCIe 4.0 either. So all I'm left with is increasing DRAM or a new SSD

In normal times, you could buy a new GPU. I typically have upgraded GPUs about twice as often as the motherboard + CPU + RAM. Most new GPUs still perform almost as well on PCIe 3.0 x16.

Storage is another thing I buy at a faster rate than general upgrades.

-

> Originally posted by Ladis
>
> And in the majority of form factors sold by Apple, you can't upgrade PCs much more either.

Even the Mac Pros have special Thunderbolt-enabled video cards and feature maximum Apple price-gouging.

And, of course, you wouldn't want to mess up the highly-engineered chassis airflow by putting in a 3rd party card, would you?

- Likes 1
